But how do you know that the data you’re collecting from students is valid and reliable? How do you know that it isn't just reflecting students' momentary moods?

In this episode of Research Minute, Dr. Tara Chiatovich, data scientist at Panorama, breaks down how to check for validity and reliability in a student survey. She shares three characteristics you can look for to ensure that your survey minimizes measurement error and produces data that educators can trust.

Here are three hallmarks of a research-backed student survey:

1. The items in survey topics “hang together” well.

First, it's important that the items (or questions) in a specific survey topic, such as Growth Mindset or School Climate, "hang together" well. To check this, we use a statistic called Cronbach’s alpha, which measures internal consistency: how closely students' answers to the items in a topic move together. A high Cronbach’s alpha shows that the items that make up a topic really are tapping into the same underlying idea. This in turn tells us that the topic is reliable.

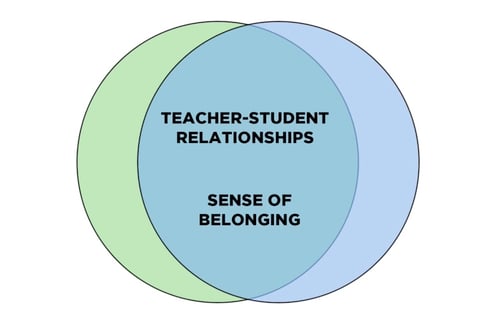
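To make the idea of items "hanging together" concrete, here is a minimal sketch of how Cronbach's alpha can be computed with the standard formula (the number of items, the variance of each item, and the variance of students' total scores). The function name `cronbach_alpha` and both small response tables are invented for illustration. They are not Panorama data or Panorama's code.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Return Cronbach's alpha for a table of item scores.

    `responses` holds one row per student; each row lists that student's
    numeric answers to the k items in a single survey topic.
    """
    k = len(responses[0])
    if k < 2 or len(responses) < 2:
        raise ValueError("need at least two items and two respondents")

    # Sample variance of each item (column) across students.
    item_variances = [variance(column) for column in zip(*responses)]
    # Sample variance of each student's total score across the items.
    total_variance = variance([sum(row) for row in responses])
    if total_variance == 0:
        raise ValueError("total scores do not vary; alpha is undefined")

    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)


# Hypothetical answers (1-5 scale) from five students to three items.
# Here each student answers the three items in a similar way.
consistent_items = [
    [1, 1, 2],
    [2, 2, 2],
    [3, 3, 4],
    [4, 4, 4],
    [5, 5, 5],
]

# Hypothetical answers where the three items do not move together.
unrelated_items = [
    [1, 5, 3],
    [2, 1, 4],
    [3, 4, 1],
    [4, 2, 5],
    [5, 3, 2],
]

print("alpha, consistent items:", round(cronbach_alpha(consistent_items), 2))
print("alpha, unrelated items:", round(cronbach_alpha(unrelated_items), 2))
```

In this toy example the first table yields a high alpha and the second a very low one (alpha can even be negative when items pull in different directions). Real survey analyses use far more respondents than five.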

2. The correlations among survey topics are larger for more-related topics and smaller for less-related topics.

This point refers to convergent and discriminant validity. Convergent validity is when topics that are similar to each other, such as Teacher-Student Relationships and Sense of Belonging, have relatively strong correlations with each other.

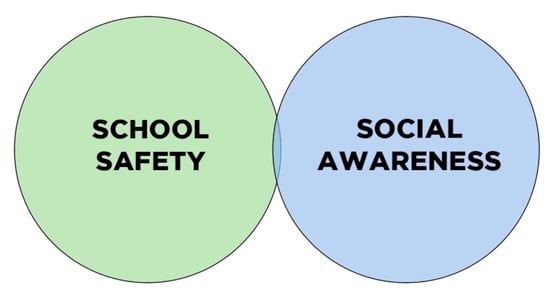

On the other hand, discriminant validity is when topics that are relatively unrelated—such as School Safety and Social Awareness—show small or no correlations to each other.
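The comparison described above can be sketched with a plain Pearson correlation between topic scores. The topic names come from this article, but the per-student scores below are made up for illustration, and the helper `pearson` is a hand-written assumption rather than any particular library's API.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    # Sum of cross-products of deviations from each mean.
    cross = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    return cross / (spread_x * spread_y)


# Hypothetical average topic scores for the same six students.
topic_scores = {
    "Teacher-Student Relationships": [3.2, 4.1, 2.5, 4.8, 3.9, 2.1],
    "Sense of Belonging": [3.0, 4.3, 2.8, 4.6, 3.7, 2.4],
    "School Safety": [4.0, 3.1, 3.6, 3.3, 4.2, 3.0],
    "Social Awareness": [3.5, 3.6, 3.1, 3.0, 3.4, 3.4],
}

# Convergent validity: related topics should correlate strongly.
convergent_r = pearson(
    topic_scores["Teacher-Student Relationships"],
    topic_scores["Sense of Belonging"],
)

# Discriminant validity: less-related topics should correlate weakly.
discriminant_r = pearson(
    topic_scores["School Safety"],
    topic_scores["Social Awareness"],
)

print("related topics r:", round(convergent_r, 2))
print("less-related topics r:", round(discriminant_r, 2))
```

With these invented numbers, the related pair shows a strong correlation and the less-related pair shows a correlation near zero, which is the pattern a survey with good convergent and discriminant validity should produce.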

3. The survey is designed using best practices with checking and testing built in.

Our third and final point concerns all of the hard work that goes into creating the survey. Instead of just checking for reliability and validity at the end, a well-designed survey builds in several checks as it is being created. These steps include:

- Interviews and focus groups: Researchers conduct interviews and focus groups with students to understand how they think about the topics on the survey and whether the scales are relevant, useful, and discernible to the target respondent group.

- Item development best practices: Researchers follow survey design best practices to guide question writing—including wording survey items as questions rather than statements and avoiding "agree-disagree" response options.

- Expert review: Researchers collect feedback from subject matter experts with an eye toward what’s missing and what may prove challenging.

- Cognitive pretesting: Students from diverse backgrounds restate the survey items aloud in their own words and answer each item in a "stream of consciousness" way.

We’ve just reviewed what to look for in a student survey to ensure that it doesn't reflect students’ momentary moods. However, keep in mind that no survey is valid and reliable in all situations. It's important to consider who the survey was designed for and to make clear to students that there are no right or wrong answers.

At Panorama, we take pride in the thought we put into developing our student surveys. High-quality surveys lead to high-quality data, and high-quality data will better equip schools and districts to take action.

